I mentioned a while back (see list at Appendix A) some of the philosophical as well as practical problems societies around the globe will have to address as our robot slav- I mean, zero-leave helpers take on more tasks once done exclusively by humans. As the power of technology grows and economic, legislative and environmental demands all increase the pressure for faster roll-outs, we face one of our first tests in our new roles as demi-gods – telling our creations (in this case driverless cars) who to kill in a life-and-death situation...

Nightmare scenario #1

The scenario I have in mind, already explored in the film ‘I, Robot’ (a poor take on some fab Isaac Asimov books), concerns a sudden incident where there are limited choices – all of them nasty:

- The vehicle is crossing a bridge over a busy motorway at some speed with several passengers on board.

- For some reason, it begins to lose control and there is no time to brake although there is just time to choose a direction (left or right) to use the barriers to come to a halt. Continuing straight is not an option.

- However, on the left is a small child, on the right is an old person. Crashing onto the motorway would involve innumerable injuries and almost certainly multiple fatalities. As well as a traffic jam.

So, which of the two humans should the AI choose to die? This is one of those choices which has a lot of subtlety to it. Even when humans make the same choice, they each bring many and varied sets of ‘built-in rules’ into play in the gut decision.

Getting the point(s)

We already have many rather less deadly processes which are rules-based. Back in the 1980s I had the dubious pleasure of working in a Local Authority Housing Department. For many decades, these places ran with quite minimal rules – how long you had been on the list and whether you were a good tenant (no rave parties, police raids etc., as well as paying the rent) were very important. Children were also a factor, although the ability simply to breed uncontrollably with or without a legal partner was not in itself significant.

Then in the late 70s our political masters began to get involved and wanted to be seen to be doing something for all manner of different social groupings (well, ones which vote). They thus began to introduce what rapidly became huge lists of different factors – over 1600 by the time I left – each of which attracted a tiny, tiny points score, with the highest totals getting a place to live. Of course, they didn’t build more houses – most LAs had a net loss of housing stock as the good tenants jumped on the Right To Buy (votes) bandwagon – so the overall practical effect was minimal; 2pts for this or that factor when you need 2000+ on average for a flat…? But it made the Council feel good and, more importantly, shifted some responsibility (for not getting a place) onto ‘the system’.
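For the programmers among you, the mechanics of such a points system are about as simple as software gets. What follows is only a minimal sketch: the three factor names and their weights are invented for illustration (the real list ran to over 1600 entries and I am not reproducing any of them), and the only figure taken from above is the rough 2000-point bar for a flat.

```python
# Hypothetical factors and weights, invented purely for illustration.
# The real lists ran to over 1600 entries.
POINTS_PER_FACTOR = {
    "years_on_list": 2,       # points per year waited
    "dependent_children": 2,  # points per child
    "good_tenant": 5,         # flat bonus (True counts as 1)
}

# Rough bar for a flat, as quoted in the text ("2000+ on average").
THRESHOLD = 2000


def total_points(applicant: dict) -> int:
    """Sum weight * value over every known factor; missing factors score 0."""
    total = 0
    for factor, weight in POINTS_PER_FACTOR.items():
        total += weight * int(applicant.get(factor, 0))
    return total


def qualifies(applicant: dict, threshold: int = THRESHOLD) -> bool:
    """An applicant gets a place only if their total reaches the threshold."""
    return total_points(applicant) >= threshold


applicant = {"years_on_list": 10, "dependent_children": 2, "good_tenant": True}
print(total_points(applicant), qualifies(applicant))  # prints: 29 False
```

Ten years on the list, two children and a spotless record gets this made-up applicant 29 points, which shows how little a couple of points here or there can matter against a bar of that size.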

Human Rights vs local democracy

So, back to our crashing car and the poor AI system. Someone human will therefore need to develop a similar ‘points list’ of factors it must consider – youth vs age, always go right (on Thursdays) etc. But it gets better… Such lists may well vary by location – in certain societies, a woman is still sadly seen as less ‘valuable’ than a man. Or certain persons in the Deep South of the USA? And can the relatives of the chosen target sue the car company? Or the DVLA?! Indeed, should we allow for such variation in ‘plug-in morality’, given the prevalence of global Human Rights in any state with a functioning justice system?

And it won’t stop there. As information flow speeds up, it could allow various AI Morality Committees to add yet more factors… What if the young person’s medical records were instantly to hand (5G, anyone?) and they had an incurable, wasting disease with only a 7.54% chance (according to the NHS AI) of reaching their next birthday, whereas the 60-something was a fit and active veteran? Unless of course the older one also had a rare blood group and healthy liver (which was likely to survive the impact), which is what that patient in the hospital just down the road is waiting for? Or if the old person was the Queen? Or had been to prison for - what? Or we decide to balance out all road deaths according to population demographics to be ‘fair’…

Over to fate 2.0

Of course, you could simplify matters and just run over whoever is wearing the Millwall shirt! Or we could simply say we do not want to play at this bit of being demi-gods and get the AI to just flip a logical coin and leave it up to what we call ‘fate’… And microprocessor designers. Now where’s that article on random-number theory and built-in chip bias…? But really – can you think of a politician who wouldn’t want to get their name on creating a list of such factors (at least until the first bodies arrive in the morgue)?
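On the chip-bias worry, there is at least one well-known trick for getting a fair flip out of an unfair coin: von Neumann's debiasing method, which takes raw bits in pairs, throws away matching pairs and keeps the first bit of any pair that differs. The sketch below is just that, a sketch. The function names (`von_neumann_flip`, `os_bit`, `choose_direction`) are my own, it assumes the raw bits are independent with a constant bias, and nothing here is how any real vehicle makes its decisions.

```python
import random
import secrets
from typing import Callable


def von_neumann_flip(raw_bit: Callable[[], int]) -> int:
    """Return one fair bit from a possibly biased source of independent bits.

    Bits are read in pairs: (0, 1) yields 0, (1, 0) yields 1, and equal
    pairs are discarded. A source that never varies would loop forever.
    """
    while True:
        a, b = raw_bit(), raw_bit()
        if a != b:
            return a


def os_bit() -> int:
    """One raw bit from the operating system's random source."""
    return secrets.randbits(1)


def choose_direction(raw_bit: Callable[[], int] = os_bit) -> str:
    """Hypothetical 'fate 2.0': map the fair bit onto left or right."""
    return "left" if von_neumann_flip(raw_bit) == 0 else "right"


# Demonstration with a deliberately lopsided coin that gives 1 about 90% of
# the time; the debiased flips should still come out close to 50/50.
rng = random.Random(0)


def biased_bit() -> int:
    return 1 if rng.random() < 0.9 else 0


flips = [von_neumann_flip(biased_bit) for _ in range(10_000)]
print("share of 1s after debiasing:", sum(flips) / len(flips))
print("direction chosen:", choose_direction())
```

The price is wasted flips: the more lopsided the raw coin, the more pairs get thrown away before one is usable, which may matter when you have milliseconds to decide.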

When it is us behind the wheel, our brains run through our personal list of built-in experiences and prejudices in milliseconds. Plus, we can always tell ourselves later that we had a blackout, or cannot remember why we chose the one we did, even if we know; humans are good at that (it’s a survival trait). But with our AI car, it’s our deliberate choice beforehand – and we shall have to learn to live with that.

Appendix A

Other Optima AI-related articles:

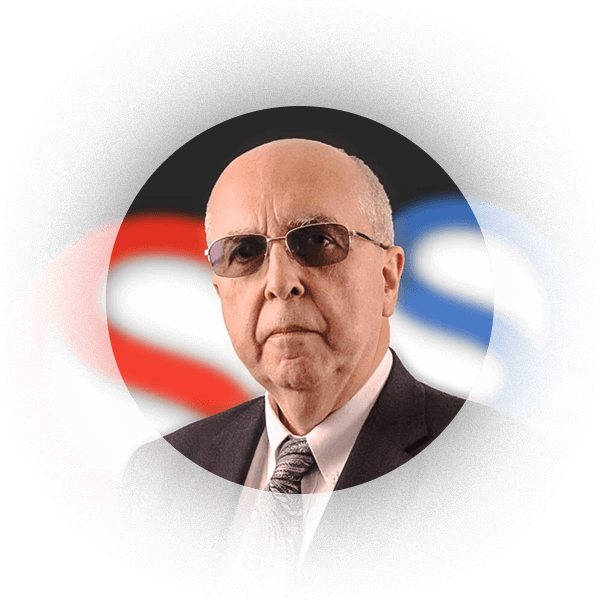

Senior Consultant

Peter is one of our senior consultants and has many years of programming and software consultancy to call upon, having ‘seen the light’ and changed careers from the Public Sector back in the early 1980s when computers less powerful than your watch filled a room!

- First thoughts on Evolutionary Programming

- Smart speakers – justified paranoia or am I just behind the times?

- The Mysterious Case of the ‘Engine-Stopping Ray’

- Fortunate Correlations

- Neural Networks and APL

- My First Exam with Global Knowledge Apprenticeships

- Out of the office…well my usual one at least!